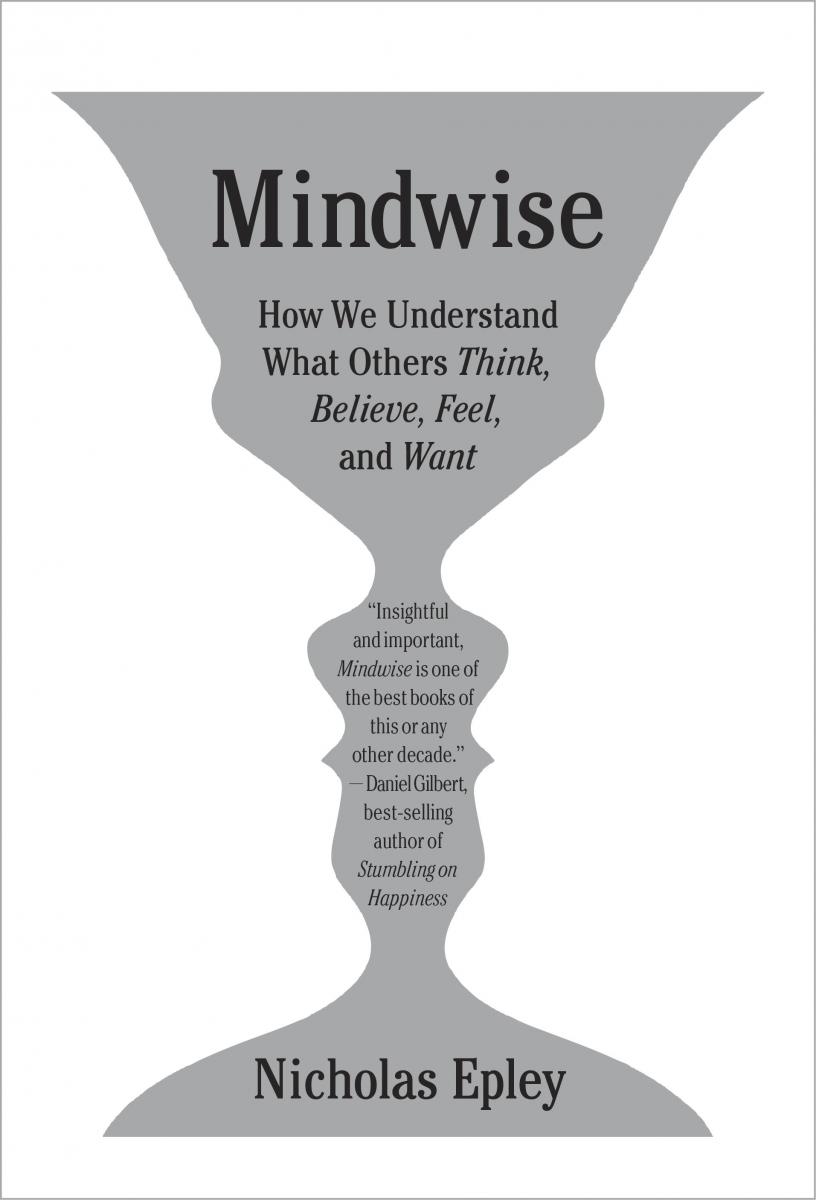

Mindwise: How We Understand What Others Think, Believe, Feel, and Want

by Nicholas Epley

Knopf, Borzoi Books (2014)

Summarized by Bryan Turner

Mindwise: How We Understand What Others Think, Believe, Feel, and Want is a book about our “sixth sense”, or mindreading, but there’s nothing supernatural about it. Epley is an experimental social psychologist, and this is a book about his research into how we understand the intentions, motives, thoughts, beliefs, feelings and wants of ourselves and others, our mistakes in doing so, and what we can do to correct for these predictable errors.

Why is this important? Because our sixth sense underpins our ability to cooperate. In the business world, cooperation is foundational for performance and profit. Anyone whose job rests, even in part, on successful interactions with others (cooperation) will benefit from this book.

The book is split into four parts. Part one is about how we misread minds: 1) how we understand less about our own and others’ minds than we’d expect and 2) what we can and can’t know about our own minds. Part two is about how we read minds when we shouldn’t and fail to read minds when we should. Part three is about the mistakes we make (egocentrism, stereotyping and the correspondence bias) in understanding the minds of others. Part four is about what we can do to be better mindreaders, that is, how we can get better at understanding people’s motivations, intentions, thoughts, beliefs, feelings and wants.

Annotated table of contents:

- Preface: Overview of the book.

- Part 1: How we misread minds.

- Chapter 1: We understand less about the minds of others, and our own minds, than we’d expect.

- Chapter 2: What we can and cannot know about our own minds.

- Part 2: Using our sixth sense when we shouldn’t and not using it when we should.

- Chapter 3: How we fail to read minds when we can and should, how it leads to psychological distance and the implications for cooperation.

- Chapter 4: How we read minds when we shouldn’t, the mistakes that creates and the implications for cooperation.

- Part 3: Mistakes we predictably make in using our sixth sense.

- Chapter 5: Egocentrism makes it hard for us to accurately read minds but there are things you can do about it.

- Chapter 6: We over-rely on stereotypes and make predictable errors in mindreading. Again, there are things you can do about it.

- Chapter 7: Observed behavior does not equal someone’s intentions. The correspondence bias and its implications.

- Part 4: How to do better.

- Chapter 8: How to correct for, and avoid, all these predictable mistakes you make in mindreading.

CHAPTER BY CHAPTER SUMMARY

CHAPTER 1: AN OVERCONFIDENT SENSE

Did you know seeing someone for only 1/20 of a second is enough for you to form impressions about them that bias your assessment of them and how you interact with them? The problem is that, in many cases, those impressions are not accurate.

“We think we understand the minds of others, and even our own mind, better than we actually do.” We’re overconfident in our mindreading abilities and this makes us wrong a lot of the time for predictable reasons. Correspondingly, when dealing with colleagues, friends and even loved ones, we know a lot less about their minds than we think we do.

Fun fact: on average, mindreading between strangers was correct about 20% of the time, whereas between close friends and loved ones it was correct around 35% of the time. In other words, we’re wrong about the minds of strangers four out of five times and wrong about the minds of loved ones roughly two out of three times. With that, you can see why one of the pieces of advice on our decision making page is to be more humble.

Some good quotes from the chapter are:

- “The central challenge for your sixth sense is that others’ inner thoughts are revealed only through the façade of their faces, bodies, and language.”

- “People’s ability to spot deception was only a few percentage points better than a random coin flip: people were 54 percent accurate overall, when random guessing would make you accurate 50% of the time.”

- “The problem is that the confidence we have in this sense far outstrips our actual ability, and the confidence we have in our judgment rarely gives us a good sense of how accurate we actually are.”

- “The main goal of this book is to reduce your illusion of insight into the minds of others, both by trying to improve your understanding and by inducing a greater sense of humility about what you know – and what you don’t know – about others.”

How to apply this to ethical systems design:

- Don’t assume you know why someone did something, or what motivates them – remember that you’re likely to be wrong if you guess. Ask them (more on this in chapter eight). This is especially important for managers and people who design systems that are supposed to align individual behavior with organizational goals.

CHAPTER 2: WHAT YOU CAN AND CANNOT KNOW ABOUT YOUR OWN MIND

“The only difference in the way we make sense of our own minds versus other people’s minds is that we know we’re guessing about the actual minds of others. The sense of privileged access you have to the actual workings of your own mind – to the causes and processes that guide your thoughts and behavior – appears to be an illusion.”

You know why you think and feel something, right? Well, as it turns out, you don’t. We can’t see all the inner workings of our own minds, so when we try to decipher why we feel a particular way we’re really just guessing, the same as when we try to read the minds of others. This chapter is filled with witty anecdotes and examples that illustrate just what this means.

Most people think this doesn’t apply to them (do you?) until they see the examples firsthand; the planning fallacy bet, the elephant drawing, and the George Bush examples are good for this. The basic points are sound: introspection is limited, you guess when reading your own and others’ minds, and you come up with rationalizations after the fact.

Our psychological heroes in this chapter are construction (or the limits of introspection), associative networks, the planning fallacy and naïve realism.

Some good quotes from this chapter are:

- “You are consciously aware of your brain’s finished products – conscious attitudes, beliefs, intentions, and feelings – but are unaware of the processes your brain went through to construct those final products, and you are therefore unable to recognize its mistakes.”

- “You are missing the construction that happens inside your own brain: the triggers and intervening neural processes that make you do what you do and think what you think. We don’t understand ourselves perfectly well because we have access to only part of what’s going on inside our heads.”

- “What’s surprising is how easily introspection makes us feel like we know what’s going on in our own heads, even when we don’t. We simply have little awareness that we’re spinning a story rather than reporting the facts.”

How to apply this to ethical systems design:

- Associative networks: Pay attention to the environment and contextual triggers, as we discuss on our contextual influences page, which, incidentally, is managed by Professor Epley himself.

- Planning fallacy: You’re probably going to think that a particular project will take less time than it actually does. Make sure you allow sufficient time for a task; a big cause of unethical behavior is time pressure.

- Naïve realism: This is the “I’m right and you’re biased” mindset. When emotionally aroused (in the heat of the moment), you won’t change your mind or think rationally by weighing the best options and giving the alternatives sufficient attention. Instead, you’ll make a gut choice based on automatic processes and then justify it. What can you do to make better decisions? Slow down. Gain a little emotional distance and use the controlled processing that dismantles many of our automatic biases. Ask yourself “can I believe it?” and then “must I believe it?” These questions lead to very different frames of analysis, which can help you unearth your real preferences and make better decisions.

CHAPTER 3: HOW WE DEHUMANIZE

“You are describing psychological distance when you say that you feel ‘distant’ from your spouse, ‘out of touch’ with your kids’ lives, ‘worlds apart’ from a neighbor’s politics, or ‘separated’ from your employees.” This is what failing to engage your sixth sense looks like in action.

If you think it’s important to understand what motivates your employees, students or customers, then it’s important to understand how and when we engage, and fail to engage, our mindreading ability. That’s what this chapter and the next are about.

Studies show that managers think they themselves are motivated intrinsically but think their employees are motivated extrinsically. As it turns out, this belief about employees is wrong, and it’s a big mistake when you’re trying to understand how to motivate your people.

If you don’t understand people’s incentives then it’s hard to create incentive systems that are going to work well. Our mindreading abilities are remarkable but there are predictable reasons why you don’t engage them when you should. Disengagement comes from distance. Not surprisingly then, learning when to engage your sixth sense can help you gain insights you’d otherwise miss.

Some good quotes from the chapter are:

- “Every business leader is charged with getting things done through people. This requires understanding what actually motivates people in their jobs. This is an obvious mind-reading problem: What do my employees really want?”

- “Your sixth sense only works when you engage it.”

- “Like closing your eyes and then concluding that nothing exists, failing to engage your ability to reason about the mind of another person not only leads to indifference about others, it can also lead to the sense that others are relatively mindless.”

How to apply this to ethical systems design:

- If you find yourself thinking of others as extrinsically motivated, or relatively mindless, this could be a good reminder that you may have some psychological distance to bridge.

- Don’t make assumptions about what motivates your employees, customers or colleagues when you can find out. Get their perspective by asking them, as discussed more fully in chapter eight.

CHAPTER 4: HOW WE ANTHROPOMORPHIZE

Our brain’s default settings for engaging with the mind of another are not optimally calibrated. We make mistakes by seeing minds where none exist (this chapter) and by not engaging our sixth sense when we should (the previous chapter). Understanding what triggers us to anthropomorphize can help us notice when we’re engaging our mindreading ability where it’s not advantageous to do so. The three triggers for engaging our sixth sense when we shouldn’t are: 1) it seems like it has a mind, 2) its behavior can be explained by having a mind, and 3) it reminds me of myself and therefore has a mind.

Some good quotes from this chapter are:

- “If you and I can be tricked into seeing a mind where no mind actually exists, then the really interesting question is not whether some things really have minds or not but, rather, what are the tricks? These tricks matter because they help explain why people seem so completely inconsistent in their mind reading from one moment to the next.”

- “Making people feel lonely in experiments also at least momentarily increased their belief in God.”

- “Recognizing the mind of another human being involves the same psychological processes as recognizing a mind in other animals, a god, or even a gadget. It is a reflection of our brain’s greatest ability rather than a sign of stupidity.”

CHAPTER 5: THE TROUBLE OF GETTING OVER YOURSELF

“People using ambiguous mediums think they are communicating clearly because they know what they mean to say, receivers are unable to get this meaning accurately but are certain that they have interpreted the message accurately, and both are amazed that the other side can be so stupid.”

When trying to understand another’s mind, we start from how we ourselves feel about a situation, which leads to more predictable mistakes in how we read minds. The two main classes of mistakes are the “neck problem” and the “lens problem”. The neck problem is that you and someone else may be paying attention to different things (think of necks turned in different directions), which prevents you from communicating effectively. The lens problem is that even when you are paying attention to the same thing, your particular biases filter the information, so the two of you will likely evaluate that thing differently (think of lenses through which you see the world). You can correct for the neck problem by thinking about, or asking the other person, what they’re paying attention to in a given scenario. The lens problem is harder to correct.

Some good quotes from this chapter are:

- “You cannot simply try harder to view the world through the eyes of another and hope to do so more accurately, because the lens that biases your perceptions is often invisible to you.”

- “You don’t overcome the lens problem by trying harder to imagine another person’s perspective. You overcome it by actually being in that perspective, or hearing directly from someone who has been in it.”

- “One consequence of being at the center of your own universe is that it’s easy to overestimate your importance in it, both for better and for worse.”

How to apply this to ethical systems design:

- The neck problem: when evaluating an issue, think about what others are paying attention to and what criteria they could be using. Use that to make sure you’re both paying attention to the same things.

- The lens problem: after correcting for the neck problem and ensuring you’re paying attention to the same things, get someone else’s perspective by actually putting yourself in their situation or asking them directly. Remember that perspective taking doesn’t solve the lens problem, so for real insight you’ll need to experience the situation yourself or ask someone who is in it.

CHAPTER 6: THE USES AND ABUSES OF STEREOTYPES

Frequently the stereotypes we have about groups of people have some accuracy to them, but we almost inevitably overestimate how much insight we actually get from them. In a lot of cases, while there might be some insight, the differences between groups are marginal and we almost always exaggerate them. This makes sense given how we process information: we emphasize our differences, not our similarities, to create identity and experiences, and our brains do their best to find causal explanations even when none exist.

Importantly, we only rely on our stereotypes when we don’t have other good evidence to go on. After we talk with someone for ten minutes, our initial judgment based on stereotyping has pretty much vanished. Some stereotypes can be a good starting point in the absence of other information, but remember that we vastly overestimate the magnitude of the patterns we observe. Any insight you gain from them should be only one piece of evidence among several.

Some good quotes from this chapter are:

- “We have too much insight from too little information from the stereotypes we rely upon. Relying on data we’ve ‘seen, imagined, or heard’ is not tremendously accurate.”

- “People primed with positive stereotypes about the groups they are in do better, while people primed to think the group they are part of has a negative stereotype do worse on tests.”

- “In general, stereotypes are more accurate when you’ve had direct experience with a group (such as one you belong to), know a lot about the group in question (because it’s in the majority), and are asked about clearly visible facts (such as about visible behavior rather than about invisible mental states, like attitudes, beliefs, or intentions).”

How to apply this to ethical systems design:

- When you notice yourself stereotyping, remember that the insights you’ll get from stereotyping are small, that they don’t provide causal explanations, and that they only last until you get to know someone or their work. If you’re relying on stereotypes, that could be a sign that you need to learn more about the person you’re stereotyping, because what they actually do is generally a more accurate guide than what you think about their group.

CHAPTER 7: HOW ACTIONS CAN MISLEAD

“Only a fool would infer that a person who slips on an icy sidewalk wanted to fall, but the contextual forces that contribute to our successes and stumbles are routinely less obvious than ice on a sidewalk.”

In a nutshell, the above quote is what this chapter is about. As users of this site will surely recognize, context matters, sometimes more than people’s personality or values, and it’s rarely as simple as “observed behavior equals intentions”.

Like many biases though, the correspondence bias – where we assume that observed behavior is a direct result of people’s intentions – is our default setting. We therefore naturally and mistakenly assume that we know much more about people than we really do. This can result in misevaluating situations, addressing the wrong questions and thereby trying to solve the wrong problems.

Some good quotes from this chapter are:

- “The problem is that life is viewed routinely through the zoom lens, narrowly focused on persons rather than on the broader contexts that influence a person’s actions.”

- “Like many habits, the tendency to make inferences about a person’s state of mind simply from their observed actions can be weakened intentionally; you can learn to overcome it.”

- “Much more effective for changing behavior is targeting the broader context rather than individual minds, making it easier for people to do the things they already want to do.”

- “Human beings, like any animal, are more likely to do things that are easy rather than hard. They don’t have some deep desire to litter; they’re just more likely to do whatever is going on around them.”

How to apply this to ethical systems design:

- Before you judge a particular behavior, whether good or bad, take a step back and ask yourself how the environment might have contributed. Just as you wouldn’t infer that someone who slipped on an icy sidewalk wanted to slip, remember that the correlation between observed behavior and people’s intentions is low in many environments.

- Make sure you consider the environment when problem solving. Is the intervention you’re trying to promote really the right intervention to address the issue or is there something from the environment you’re missing?

- Additionally, if it is the right intervention, is it really a people problem or are you just trying to go against human nature? For the most part, people want to do the “right” thing, but we generally do the easy thing. Are you making the “right” thing the easy thing? And if not, how can you?

CHAPTER 8: HOW, AND HOW NOT, TO BE A BETTER MIND READER

Part one talked about what we can and cannot know about minds. Part two talked about how we make mistakes by mindreading when we shouldn’t and not mindreading when we should. Part three talked about the mistakes we make when mindreading. Part four, this chapter, talks about what to do and what not to do about it.

What doesn’t work for improving mindreading? Learning to read body language and improving perspective taking. Some people can make marginal gains from training in these areas, but many of the improvements are negligible, even if they are overstated in the popular media.

Essentially, if you really want to understand what someone is thinking, you’re going to have to ask them. What we know about our own minds is limited, though, so don’t ask them why they think something; ask them what they think. Then, because we so frequently misunderstand what’s said (because of the lens problem), repeat what they said back to them to make sure you understand it.

Some good quotes from this chapter are:

- Talking about reading emotions and thoughts: “Never have we found any evidence that perspective taking – putting yourself in another person’s shoes and imagining the world through his or her eyes – increased accuracy in these judgments. In fact, in both cases perspective taking consistently decreased accuracy.”

- “The relatively slow work of getting a person’s perspective is the way you understand them accurately, and the way you solve their problems most effectively.”

- “Others’ minds will never be an open book. The secret to understanding each other better seems to come not through an increased ability to read body language or improved perspective taking but, rather, through the hard relational work of putting people in position where they can tell you their minds openly and honestly. Companies truly understand their customers better when they get their perspective directly through conversation, survey, or face-to-face interaction, not when executives guess about them in the boardroom. Managers know what their employees think when they are open to the answers and employees feel safe from retaliation, not when managers use their intuition.”

How to apply this to ethical systems design:

- Don’t take perspective. Get it. When doing so, don’t ask why people think a certain way (remember the pollster analogy); ask what they think. Then repeat back what they’ve said to make sure you’ve understood it.

- Many of the problems Mindwise highlights can be attenuated by switching from automatic to controlled processing. In other words, use the “rider” to put the brakes on the “elephant” to check a number of the cognitive biases discussed in the book.

The main psychological cast of characters from this book is:

- Construction (or the limits of introspection)

- Anthropomorphism

- Dehumanization and psychological disengagement

- Associative networks

- Egocentric biases

- Stereotyping (stereotype threat and priming)

- Neck problem

- Lens problem

- Planning fallacy

- Curse of knowledge

- Automatic and controlled processing

- Naïve realism

- Bystander effect

- Should and want selves

- Intrinsic and extrinsic motivations

- Social intuitionism

- Parochial altruism

- Spotlight effect